As you may have read, we are setting up a new frontend platform for the La Redoute website. Based on our experience with the existing one, it was a good opportunity to rethink how we do many things, such as data fetching, bundling, and analytics.

As for every modern e-commerce website, data analytics is essential to help the business find efficiencies, discover opportunities, and eventually solve previously unknown problems.

This post shares how we rethought our tracking methods with the help of concepts brought by a modern UI library, React, which we chose as the foundation of the new stack.

Existing pain points

To prepare this work, we first collected the pain points we hit whenever a new tracking development had to be made on the previous stack.

To illustrate, a common scenario looks like this:

- Page is initially rendered with a first data layer stored in a global variable

- Once the page is ready, the analytics provider uses the initial data layer for its event “Page ready”

- A user interacts with the website (e.g. clicks a button). Because an event handler is attached to the button’s DOM element, its callback is triggered

- In the callback, the global data layer variable is updated with new fields corresponding to variables of the provider-specific tracking plan

- The analytics provider observes the changes on the global variable.

Unpredictable

The current data layer is therefore a single global variable, updated after each tracked user interaction but never reset.

The legacy website also lacks a clearly defined component tree (like many websites from the 2000s and 2010s built mostly with jQuery and vanilla JS). Consequently, we cannot update the data layer with only the information relevant to the current event: all we can do is add or update the current event’s fields in the global data layer.

As a result, the data sent to the analytics provider for a given event is not predictable, because it can include data from previous events.
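To make the leak concrete, here is a minimal sketch of that behaviour. The names (`globalDataLayer`, `legacyTrack`) and the event fields are hypothetical and only illustrate the mechanism; they are not taken from the legacy code.

```typescript
// Hypothetical legacy-style data layer: one global object, mutated in place.
type DataLayer = Record<string, unknown>;

const globalDataLayer: DataLayer = { page: "cart" };

// Each tracked interaction merges its fields into the global object,
// and nothing ever removes the fields written by earlier events.
function legacyTrack(fields: DataLayer): DataLayer {
  Object.assign(globalDataLayer, fields);
  // Snapshot of what the analytics provider would observe at this point.
  return { ...globalDataLayer };
}

const afterPromo = legacyTrack({ event: "promoCodeApplied", promoCode: "WELCOME10" });
const afterRemove = legacyTrack({ event: "itemRemoved", itemId: "sku-123" });

// The second event still carries `promoCode`, written by the first one.
const leakedPromoCode = afterRemove.promoCode;
```

What the provider receives for `itemRemoved` therefore depends on which events happened before it.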

Too “analytics-oriented” data layer

Data layer fields are sometimes named after conversion variables, for example evar31. This was designed “analytics first”, but it makes the data layer unclear for maintainers.

It also couples the presentation layer tightly to the analytics provider the events are dispatched to, which we should avoid.

DOM dependent

Some user interactions are tracked based on DOM selectors, contrary to what we expect from a clean component, which should have no idea of where it is being rendered.

For example, a tracked submit action on a form should be reusable on many pages without being aware of its location in the app.

Target

The sustainability of any implementation depends on common clean code principles and on things like strong type checking with TypeScript, which is set up on the new frontend stack. Given the flaws of the existing implementation, however, we wanted our tracking solution to meet the following specific criteria:

Context with React

As we saw, one thing we want to prevent is data “leaking” across the entire app, so we need to separate tracking concerns by component.

The frontend ecosystem has naturally evolved towards component-based libraries like React, on which the new platform relies.

If we had to keep one essential concept brought by React, it would be its virtual DOM: an in-memory representation of the DOM. In other words, for a given component, UI updates, event handling, and data bindings are handled behind the scenes by the library.

Beyond this abstraction, which improves the developer experience, the library enforces a component architecture, so we have no choice but to have a clear component hierarchy in the application.

With React 16, an essential new feature arrived: the React context (the current Context API shipped in React 16.3).

It lets us pass data down and consume it in any component without prop drilling, so that data can be shared across components more easily.

In short, a context is defined by two things:

- the context provider, a component propagating data to all its descendants

- the context consumer, a component subscribing to any change to the data supplied by a provider above it

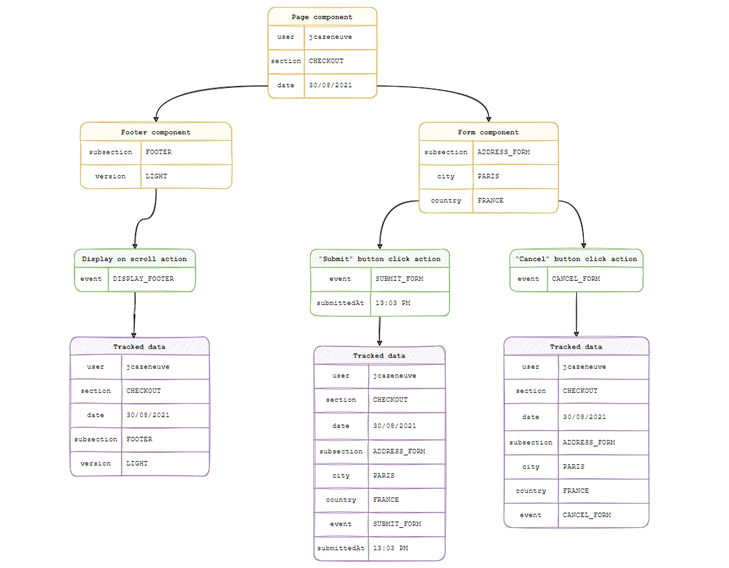

We can also nest contexts. This lets us build a tracking library that triggers events with data built up iteratively, starting from where the user activity occurs.

Example:

- On the Root component, we have a top-level tracking provider with initial page data

- We define a Component1 with its own data, which consumes the root context but also acts as a tracking provider for its descendants

- We define a Component2 which has a button whose click is tracked with a payload containing the current date

- When the button click event is triggered, the process deep-merges the data from the event payload up to the Root component data, including the Component1 data.
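The merge described in this example can be sketched without React, to isolate the logic. This is a minimal sketch under assumptions: `TrackingScope` stands in for a nested context, and `deepMerge`, `buildEventData`, and all field names are hypothetical, not the API of our library.

```typescript
type TrackingData = { [key: string]: unknown };

// True only for plain objects (not arrays, dates, or class instances).
function isPlainObject(value: unknown): value is TrackingData {
  return (
    typeof value === "object" &&
    value !== null &&
    Object.getPrototypeOf(value) === Object.prototype
  );
}

// Deep merge: values from `override` win; nested plain objects merge recursively.
function deepMerge(base: TrackingData, override: TrackingData): TrackingData {
  const result: TrackingData = { ...base };
  for (const [key, value] of Object.entries(override)) {
    const existing = result[key];
    result[key] =
      isPlainObject(existing) && isPlainObject(value)
        ? deepMerge(existing, value)
        : value;
  }
  return result;
}

// Stand-in for a nested context: a scope holds its own data and its parent scope.
interface TrackingScope {
  data: TrackingData;
  parent?: TrackingScope;
}

// Collect the scopes from the event's scope up to the root (ordered root first),
// merge them top-down, then apply the event payload last.
function buildEventData(scope: TrackingScope, payload: TrackingData): TrackingData {
  const chain: TrackingScope[] = [];
  for (let s: TrackingScope | undefined = scope; s; s = s.parent) chain.unshift(s);
  const contextual = chain.reduce<TrackingData>((acc, s) => deepMerge(acc, s.data), {});
  return deepMerge(contextual, payload);
}

// Root provider data, then Component1 data nested under it.
const root: TrackingScope = { data: { page: { name: "checkout", country: "IT" } } };
const component1: TrackingScope = {
  data: { page: { step: "delivery" }, component: "Component1" },
  parent: root,
};

// Component2's button click, tracked from inside Component1's scope.
const clickEvent = buildEventData(component1, {
  event: "click",
  clickedAt: new Date().toISOString(),
});
```

Because the event data is rebuilt from the scope chain on every call, nothing from a previous event can survive into the next one.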

Analytics provider agnosticism

By designing our tracking process from the start to be agnostic of the analytics provider, we constrain ourselves to have no provider-specific code at component level, unlike what we have today with a third-party service like Adobe Analytics.

To do this, we put the whole tracking process (i.e. gathering the data and dispatching it) into shared logic. It is handled by a React hook called useTracking, which can be used in any React component.

Briefly, a React hook is a function that lets us extract stateful logic out of a component and reuse it across components.

When the hook’s trackEvent method is called, it automatically triggers a dispatcher.

The dispatcher is defined on the root app component, and is called via the context mechanism described above.

In our case, the default dispatcher defined at the top level sends events to Adobe Analytics. But it can easily be overridden later if we want to use another provider, in addition to it or instead of it.
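As a rough illustration of why overriding is cheap, a dispatcher can be modelled as a plain function. Everything below is a sketch under assumptions: `Dispatcher`, `combineDispatchers`, and `createTracking` are hypothetical names, `createTracking` is a React-free stand-in for the hook, and the two dispatchers only record events instead of calling a real provider SDK.

```typescript
type TrackingData = Record<string, unknown>;

// A dispatcher is just a function receiving the final event data.
type Dispatcher = (data: TrackingData) => void;

// Combine several dispatchers so one event reaches every provider.
function combineDispatchers(...dispatchers: Dispatcher[]): Dispatcher {
  return (data) => dispatchers.forEach((dispatch) => dispatch(data));
}

// Hypothetical stand-ins: real dispatchers would call each provider's SDK.
const sentToAdobe: TrackingData[] = [];
const sentToOther: TrackingData[] = [];
const adobeDispatcher: Dispatcher = (data) => {
  sentToAdobe.push(data);
};
const otherDispatcher: Dispatcher = (data) => {
  sentToOther.push(data);
};

// Stand-in for the hook: components only see `trackEvent`, never the provider.
function createTracking(dispatch: Dispatcher) {
  return { trackEvent: (data: TrackingData) => dispatch(data) };
}

// Swapping or adding a provider only changes this top-level wiring.
const { trackEvent } = createTracking(
  combineDispatchers(adobeDispatcher, otherDispatcher),
);
trackEvent({ event: "submit", form: "accountCreation" });
```

The component calling `trackEvent` stays identical whether one, two, or a different provider is wired at the top level.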

Declarativity

Being declarative simply means expressing our tracking events as they present themselves.

As the previous section showed, with our React hook in particular, a tracking action is written the way we would state it: “in my form, on button click, I want to track a submit event”.

Beyond that programming principle, the idea is that instead of defining conversion variables like prop24 or var52, we try to be as direct and expressive as possible in the data layer and represent the business as it is.

So, if my event is a form submit on the account creation page for Italy, I want my data layer to look basically like this:

![]()
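As a purely hypothetical sketch of such a business-oriented shape, typed in TypeScript (the interface and every field name here are assumptions for illustration, not our actual data layer):

```typescript
// Hypothetical business-oriented event: names describe the business, not the provider.
interface FormSubmitEvent {
  event: "submit";
  page: { name: string; country: string };
  form: { name: string };
}

const accountCreationSubmit: FormSubmitEvent = {
  event: "submit",
  page: { name: "accountCreation", country: "IT" },
  form: { name: "accountCreationForm" },
};
```

Nothing in this object mentions a conversion variable or a provider, which is what keeps it readable for maintainers.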

And with the contextual computation described above, we ensure that the data layer reflects only the last triggered event. So my tracking data is declarative and predictable for each event.

Once it has been dispatched to the analytics provider, it is up to the provider to map this data correctly into its own system, according to its tracking plan.
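One way to picture that mapping step, kept entirely outside the components, is a lookup table from business fields to provider variables. This is a sketch under assumptions: the `trackingPlan` table, its variable names, and the helpers `readPath` and `mapToProvider` are all hypothetical.

```typescript
type TrackingData = Record<string, unknown>;

// Hypothetical tracking plan: business field path -> provider variable name.
const trackingPlan: Record<string, string> = {
  "page.country": "eVar1",
  "form.name": "prop2",
};

// Read a dotted path such as "page.country" from nested data.
function readPath(data: TrackingData, path: string): unknown {
  return path.split(".").reduce<unknown>(
    (current, key) =>
      typeof current === "object" && current !== null
        ? (current as TrackingData)[key]
        : undefined,
    data,
  );
}

// Translate business data into provider variables; unmapped fields are ignored.
function mapToProvider(data: TrackingData): Record<string, unknown> {
  const mapped: Record<string, unknown> = {};
  for (const [path, variable] of Object.entries(trackingPlan)) {
    const value = readPath(data, path);
    if (value !== undefined) mapped[variable] = value;
  }
  return mapped;
}

const providerPayload = mapToProvider({
  event: "submit",
  page: { name: "accountCreation", country: "IT" },
  form: { name: "accountCreationForm" },
});
```

With this split, changing the tracking plan means editing the table, not the components that emit the events.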

In conclusion

After a few months of use, this approach appears effective and makes new events easier to implement.

On top of the contextual strategy we adopted, one clear game changer is being able to express a more business-oriented data layer. On this point, one thing to watch in the future is that tracking plans are established and reviewed in collaboration with the analytics team, so that we benefit from the reusability of this strategy and do not redefine data that is already tracked.

In the same vein, we have only one app today (the Checkout part), but as we head towards many micro-frontend apps, one for each important page of the website, we’ll need to verify that we have only one root tracking provider with consistent shared data.

Also, because the tracking dispatcher implementation is left open, we are neither strongly coupled to any third-party service nor tied to any particular data analytics strategy we could adopt in the future, such as first-party data capture.

The foundations have been laid. The next step to make it a success will be this cooperation between the feature dev teams and the team owning the analytics business, to keep tracking plans as simple as possible.