Contributors: Nadia Tennich and Telmo Miranda

Mobile first and new business challenge

2020 was the biggest year for mobile shopping on record. More than 115 billion dollars were spent worldwide on online shopping platforms between November 1st and November 11th of that year.

To speed up innovation, grow our business on the La Redoute mobile application and ensure the best possible time-to-market, our team researches, scopes, designs and improves the processes behind every digital aspect, from customer acquisition to conversion and loyalty.

3 Years ago …

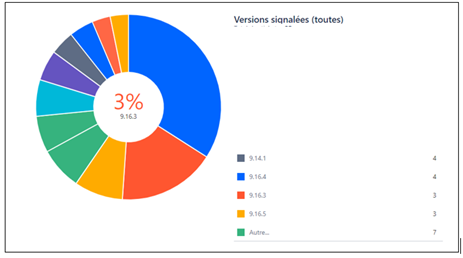

Three years ago, we wanted to increase our release frequency, which meant many app versions to test. This led to a large number of regressions.

Each version had, on average, 2 to 3 blocking bugs reported by the non-regression tests (NRTs), each of which blocked the release.

To keep up with this rapid mobile release cycle, we are working on two aspects:

• Team organization between QA and developers

• A mobile farm for automated testing.

First, we continuously work on the process and the organization so that functional testing and automation combine to discover bugs and improve overall app quality.

We integrated QA automation into a feature team to get a clear view of the scope we want to manage.

Second, we built our own bespoke mobile device farm to run our non-regression campaigns faster.

The objective was to run non-regression checks on each new application version every 2 months, and to check the daily API releases.

Below, we walk through the current release process, detailing both the delivery and the testing context, to highlight the pain points of release management.

Current release process:

Delivery context:

Currently, we have a single branch for the major release. This release is delivered every two months and integrates more than 200 tickets (features, bugs, technical issues, etc.).

With the current Git process, each release is very risky and slows down the delivery rate.

Many features at different stages of completion are merged into the same Git branch, so a single blocking feature can hold up the whole release and lead to regressions and fix versions.

Testing context:

Today, app releases are very large (more than 200 tickets pushed per release). The current process increases the risk of errors and slows down the delivery rate, since one small regression can block the entire release.

This can trigger a series of fixes for the ongoing release, which is very time-consuming because every test must be re-run on each commit.

We had only one mobile farm to handle all the application tests: the full campaign during each web release (to check that web service changes introduce no regression in the application), the tracking campaigns, the deep link campaign, and the campaign for each new application version to be delivered to the store.

New release process for mobile applications

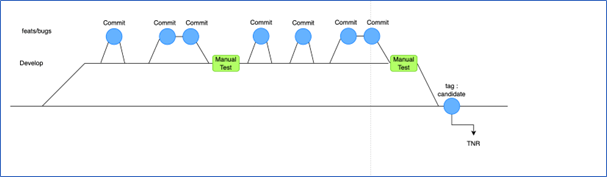

To reduce delivery time, the new release process will push fewer tickets per version and release much more frequently.

It defines a stable version where features are added and validated. As soon as a feature is ready, it is committed to a candidate version (store-ready), which then triggers the automated tests.
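The key difference from the single-branch model is that an unfinished feature no longer holds up the others. The Python sketch below illustrates that gate; it is not our actual tooling, and the `Feature` class, its `validated` flag and the `promote_ready_features` helper are hypothetical names. We also assume, as a simplification, that any commit to the candidate version triggers the automated tests.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    """A ticket being developed on the stable version (hypothetical model)."""
    key: str
    validated: bool  # True once the feature has been validated on the stable version


def promote_ready_features(stable: list[Feature]) -> tuple[list[str], list[str]]:
    """Split features into those committed to the candidate version and those kept back.

    Only validated features are promoted; an unfinished feature stays on the
    stable version instead of blocking the whole release.
    """
    promoted = [f.key for f in stable if f.validated]
    pending = [f.key for f in stable if not f.validated]
    return promoted, pending


if __name__ == "__main__":
    stable = [Feature("APP-101", True), Feature("APP-102", False), Feature("APP-103", True)]
    promoted, pending = promote_ready_features(stable)
    # Assumption: any commit to the candidate version triggers the automated tests.
    trigger_tests = bool(promoted)
    print(promoted, pending, trigger_tests)  # ['APP-101', 'APP-103'] ['APP-102'] True
```

With the old process, APP-102 would have been merged alongside the others and could have blocked the release; here it simply waits on the stable version.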

Continuous testing approach for the mobile application:

Mobile application teams are under pressure to be ever more thorough in the quality assurance of their products, and to deliver that quality at speed.

Our way to improve quality assurance (QA) is to fail fast.

The purpose of failing fast is to catch and fix a bug as close to its origin as possible, before it makes its way further down the release pipeline.

Because it is much easier and less expensive to fix an error in the context in which it was created, it is always preferable for an error not to change hands.

Over the last few years, we have noticed that the CI/CD approach has matured in web development, but applying it to mobile applications is more complicated.

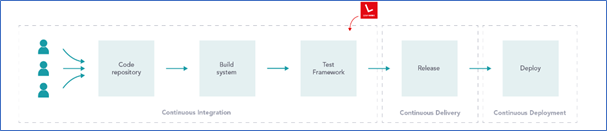

Breaking down the parts of the CI/CD pipeline:

Ref: https://www.leapwork.com/blog/continuous-testing-in-devops

Continuous Integration: In continuous integration, source code changes are frequent and rapidly turned into software release candidates.

This ensures sufficient software quality for subsequent integration and, in addition, gives developers rapid feedback on their changes and on software quality.

This allows more frequent release candidates for production.

Automated Testing: This is an area of rapidly evolving technology, where new and better solutions keep appearing. More and more manual steps are being automated with increasing precision and reliability.

Continuous Deployment/Delivery: Due to the nature of mobile app distribution, it is impossible to fully automate deployment in every scenario.

Depending on the business use case and the platform, an app can be distributed in several ways.

App stores have been the most popular and public way to distribute applications, and submitting an app to a store has remained a manual process involving manual verification steps.

This is where the delivery part cannot usually be automated and requires manual intervention.

How quality supports the new process:

How can we improve CI/CD to ensure frequent delivery?

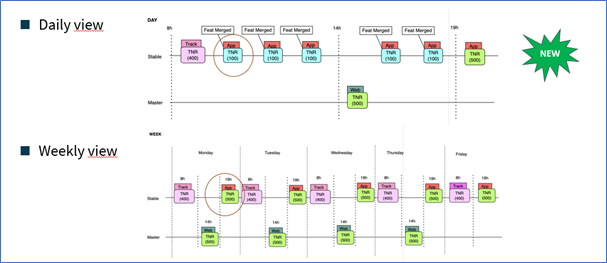

A small campaign (around 100 executions) is triggered on each commit to the stable version, giving developers a quick view of the application's current regression status.

At the end of the day (7 PM), a full campaign is triggered so that, the next morning, developers have a complete view of the application's NRT results.

During the day (around 2 PM), we trigger the full application campaign to validate the associated API release, as described in the image below:

Why add a second farm?

To handle all the automated executions and guarantee device availability for this new testing process, we decided to add a second phone farm.

With this new testing process and a new dedicated farm, we can deliver new versions to the stores (Android and iOS) much faster and with fewer regressions.

The goal is to give developers a quick view of code quality while they commit several times a day.

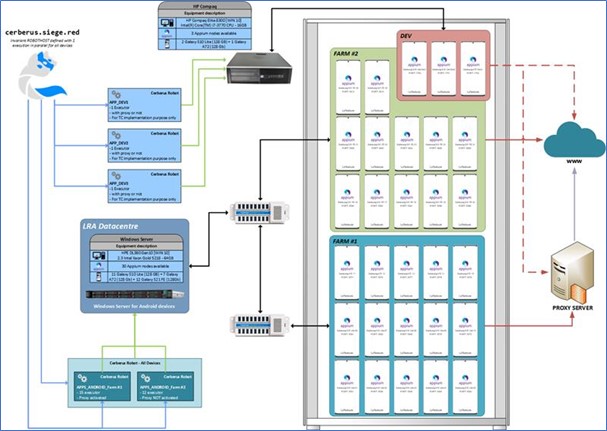

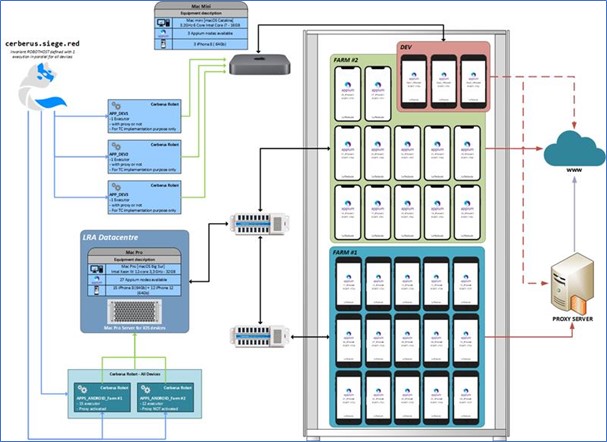
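The three test triggers described earlier (each commit to the stable version, around 2 PM, and 7 PM) amount to a small dispatch rule. The Python sketch below expresses that rule; the campaign labels, the event names and the `campaign_for` function are hypothetical, and only the schedule and the figure of about 100 executions come from our process. In practice this logic would sit in the CI server's trigger configuration rather than in application code.

```python
from datetime import time
from typing import Optional

# Hypothetical campaign labels; only the schedule itself comes from the process above.
SMOKE = "smoke campaign (~100 executions)"
API_VALIDATION = "full campaign: validate API release"
NIGHTLY_NRT = "full campaign: nightly NRT"

API_RUN_AT = time(14, 0)      # around 2 PM
NIGHTLY_RUN_AT = time(19, 0)  # 7 PM, so results are ready the next morning


def campaign_for(event: str, at: Optional[time] = None) -> Optional[str]:
    """Return the campaign to launch for a CI event, or None if nothing runs.

    `event` is either "commit" (a commit to the stable version) or "clock"
    (a scheduled tick, with `at` giving the time of day).
    """
    if event == "commit":
        return SMOKE
    if event == "clock" and at == API_RUN_AT:
        return API_VALIDATION
    if event == "clock" and at == NIGHTLY_RUN_AT:
        return NIGHTLY_NRT
    return None
```

For example, `campaign_for("commit")` returns the smoke campaign, while `campaign_for("clock", time(10, 0))` returns `None` because no campaign is scheduled at 10 AM.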

The architecture of our internal mobile device farms

Below is the detailed architecture of our mobile device farms, following the setup described in this post.

Android Stack:

For the Android stack of this new farm, we bought 12 Samsung S21 FE devices.

Here is the technical solution in place that we will describe in the following sections.

Until now, the Android stack had 15 devices (10 Samsung 10 Lite + 5 Samsung A72) connected to a specialized hub (with power supply management and data transfer), itself connected to a Windows server.

These Android devices go through a proxy, whose aim is to record the traffic the application generates so that tracking variables can be checked for non-regression.
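To illustrate the kind of check the proxy makes possible, here is a minimal Python sketch that compares the tracking parameters found in recorded request URLs against an expected baseline. The URL, the parameter names (`page_type`, `event`) and the `tracking_regressions` helper are hypothetical; we assume the proxy can export captured requests as a list of URLs, whereas real tracking payloads may also travel in request bodies.

```python
from urllib.parse import parse_qs, urlparse


def extract_params(url: str) -> dict[str, str]:
    """Return the query parameters of a captured request (first value of each)."""
    query = parse_qs(urlparse(url).query)
    return {name: values[0] for name, values in query.items()}


def tracking_regressions(captured_urls: list[str], expected: dict[str, str]) -> list[str]:
    """List expected tracking variables that are missing or changed in the captured traffic.

    A variable passes if any captured request carries it with the expected value.
    """
    seen: dict[str, set[str]] = {}
    for url in captured_urls:
        for name, value in extract_params(url).items():
            seen.setdefault(name, set()).add(value)

    problems = []
    for name, value in expected.items():
        if name not in seen:
            problems.append(f"{name}: missing")
        elif value not in seen[name]:
            problems.append(f"{name}: expected {value!r}, got {sorted(seen[name])}")
    return problems
```

An empty list means the tracking variables did not regress; each string in a non-empty list points at one variable to investigate.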

The second farm now has 12 Android devices (12 Samsung S21 FE) connected to another dedicated hub (the hubs are currently connected in series), which is connected to the same Windows server (the server was sized to handle this load when it was purchased). These devices are not connected to the proxy, since their tests do not require it.

There are also 3 dedicated devices (which may or may not have a proxy configured, depending on the usage) connected to another Windows server, dedicated to implementing new tests and validating software version upgrades (Appium, Android SDK, …).
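The Android layout above can be summarized as a small inventory in which the proxy requirement decides which pool a regression campaign runs on. The sketch below is our illustration only: the pool names, the `DevicePool` type, the `pick_pool` helper and the routing rule are hypothetical, not the farm's actual scheduler. The device counts and proxy settings come from the description above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DevicePool:
    name: str
    devices: int
    behind_proxy: bool    # traffic recorded for tracking checks
    for_regression: bool  # False for the bench used for new tests and upgrades


ANDROID_POOLS: tuple[DevicePool, ...] = (
    DevicePool("farm-1 (Samsung 10 Lite + A72)", 15, behind_proxy=True, for_regression=True),
    DevicePool("farm-2 (Samsung S21 FE)", 12, behind_proxy=False, for_regression=True),
    # The bench's proxy is set per use; it is modeled as off here for simplicity.
    DevicePool("dev bench", 3, behind_proxy=False, for_regression=False),
)


def pick_pool(needs_proxy: bool, pools: tuple[DevicePool, ...] = ANDROID_POOLS) -> DevicePool:
    """Route a regression campaign to the first pool whose proxy setting matches."""
    for pool in pools:
        if pool.for_regression and pool.behind_proxy == needs_proxy:
            return pool
    raise LookupError("no regression pool matches the proxy requirement")
```

Under this rule, a tracking campaign (`pick_pool(True)`) lands on the 15 proxied devices, while campaigns that do not need traffic capture go to the 12 new devices.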

iOS stack:

For the iOS stack, we also bought 12 new devices (12 iPhone 12) and a new Mac server.

The technical solution in place, described in the following paragraphs, is very similar to the Android one.

With the new devices added, the existing Mac mini could not handle the load, so we had to buy a new server able to handle the load of both farms.

The structure was therefore updated to mirror the Android one.

One set of devices is connected to a specialized hub and has a proxy defined, to record the traffic the application generates so that tracking variables can be checked for non-regression.

The new devices are connected to another specialized hub (also connected in series) and have no proxy configured, since their tests do not require it.

As with the Android stack, there are also 3 dedicated devices (which may or may not have a proxy configured, depending on the usage) connected to a Mac mini server, dedicated to implementing new tests and validating software version upgrades (Appium, Xcode, …).

Currently, both main servers are hosted in the LRA local datacenter.

What have we learnt?

Working with real mobile devices can be challenging: there are many of them to maintain, upgrade and monitor.

Also, when using devices for mobile app testing, OS-level notifications sometimes appear (battery, OS updates, Wi-Fi updates, …). The devices therefore need a lot of attention; we are currently working with Intune to apply mass updates and specific configurations.

- We provide fast, relevant feedback to developers on their changes and on software quality.

- The autonomy and empowerment this gives developers enables them to iterate much faster and make decisions that ensure the quality of their products at speed.

- We rely on a well-integrated process, thanks to the incorporation of the CI/CD pipeline and the continuous testing on our platform.

We rely on a cross-team, quality-driven process: it makes work more efficient and collaborative by sharing cross-functional responsibilities across all our teams, including maintaining and upgrading this large infrastructure.